How we created k6 - the engineering story.

As mentioned in a previous blog post, we here at Load Impact had realized there were no load testing tools suitable for developers. Most of them were either too simple and limited, like ApacheBench, or they were old, large and clunky GUI applications with little appeal to developers, like JMeter or LoadRunner. The end result of this lack of suitable tools was that developers have not been doing much load testing at all. With tools this complex to learn and use, it hasn’t been worth the effort for them.

We wanted to change this, and at the same time build a tool we could use ourselves in our online load testing service, so we sat down and thought about what a good, modern load testing tool for developers should look like, and then tried to come up with a design that matched those criteria. This article explains our reasoning, the conclusions and the decisions we made.

In our view, a good load testing tool for developers should be:

Scriptable in a popular, high-level language

So that a large portion of developers would feel at home immediately and not have to learn much that was new to them, while still giving them the power and flexibility they need. They can write test cases in real code, not some limited DSL.

High-performing

So that it could be run on limited hardware (e.g. a laptop) and still be useful.

Written in a popular language

So that we might get community contributions in the open source project.

Suitable for automation

Automation-friendly scripting API, pass/fail results, standardized output format that can be parsed by a computer, etc.

We also have some requirements that probably do not affect the design of the tool, such as:

It should be an open source project

Developers these days expect open source and many shy away from tools that do not publish their source code, or even from tools without an open source license acceptable to them. We also believe that having the code out in the open makes the tool better, plus it may encourage more help from, and interaction with, the community.

So what do these criteria mean for technology choices and application design then? What language would the tool be written in? What scripting language would it support? What would the scripting API look like? The command-line interface? The results output?

Development language

We wanted the language we developed the tool in to be:

- High-performing

- Popular, with a reasonably sized community

- Supported on the most common platforms (Linux/*nix, macOS, Windows)

- As high-level as possible, ensuring high productivity when developing the tool

We considered a number of languages initially, including C, C++, Rust, Go and even Swift. At first, we wanted a language without garbage collection, because GC usually involves stop-the-world pauses that can distort performance measurements, and measuring things correctly is of course important for a combined load generation and measurement tool. This meant that Java was out, as were Go and some other languages. Then at some point, our main developer Emily mentioned that she had read somewhere about a guy who knew someone whose best friend’s cousin had said they believed GC pauses in Go were exceedingly short, so (luckily) we decided to throw it into the mix regardless, and see what came out of it.

Language popularity

The next point to consider was language popularity, so we did a small, unscientific survey of the most popular GitHub projects, to see both what languages these projects were using and what kind of projects different languages tended to be used in. Hopefully, this will not turn into a language war, but what we found was, roughly, that:

- C was used for a variety of different software projects, weighted towards executable applications/utilities.

- C++ was used a lot to build databases, libraries and languages.

- Go was used for applications, developer tools and databases.

- Rust was used mainly to build Rust developer infrastructure.

- Swift was used mainly to build libraries, with a focus on UI libraries.

Remember that we’re not claiming that e.g. Swift is used only to build UI libraries. We’re just saying that our little survey found that on GitHub, the most popular projects in Swift were UI libraries. We’d prefer to use a language that is commonly used for the same kind of thing we want to build, because that means there are developers out there using this language to do roughly the same thing. These developers may want to contribute to our project, and they are also potential users of the tool.

General activity in the Swift community on GitHub seemed quite low. Few popular open source projects were written in Swift, as far as we could see, and those that were tended to be UI libraries. For these reasons, Swift was the first language we discarded.

Rust activity was higher, but the community still seemed small and a bit niche. Most of the open source Rust projects on GitHub tended to be infrastructure supporting Rust development.

C, of course, has a sizeable community, as does C++, so both of those were still in the race. And Go seemed to have a lot of momentum. Several very popular open source projects are written in Go, despite the fact that it’s a very young language.

C, C++ or Go?

C is a commonly used language for high-performance applications and lots of tools (measurement and other types) are written in C. Our previous load generator was a C application, for instance. One negative aspect with C that is hard to argue against, however, is the fact that C is not the most productive language in existence. Developing in C can be slow going and bug-prone, and we did want a language that made us productive. C is also an old language that may not be as appealing to many younger developers out there, or so we thought at least.

C++, on the other hand, has all the power of C, but with more complexity and more ways of doing things. It is interesting to note that few executable command-line applications seem to be built in C++. It feels like it is more used for larger, more complex projects, and maybe it is better suited for those kinds of projects. It is not as old as C, but neither is it as young and vital as Go. C++ doesn’t feel like a language used for “new, cool things”.

Go then, finally, is a very up-and-coming language. It definitely has momentum and is seen as something to build “new, cool things” with. Lots of new tools are built in Go and the DevOps crowd seems to love it. Initially we had “no garbage collection” as a requirement, and Go does use garbage collection, but we dropped that requirement when we realized how small an impact the GC in Go actually has on execution (GC pauses in Go are very, very short). We felt that Go had none of the drawbacks of C and C++ and also added some upside, in the form of very good concurrency support, so in the end we chose Go. We started out by implementing something very simple in Go, to get a feel for how well suited the language was for this type of application. It felt like a perfect match, so we decided to go with Go!
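One way to check the claim about short GC pauses for yourself is to read the pause statistics that the Go runtime records. The sketch below is not taken from k6. It is a minimal, assumed experiment that allocates short-lived garbage, forces a collection, and reports how many GC cycles ran and the longest pause among them. The helper name `measureGCPauses` and the allocation sizes are made up for illustration, and the numbers will vary with hardware and Go version.

```go
package main

import (
	"fmt"
	"runtime"
	"time"
)

// sink keeps the most recent allocation reachable so the compiler
// cannot optimize the allocations away.
var sink []byte

// measureGCPauses is a hypothetical helper (not part of k6). It allocates
// short-lived garbage, forces one final collection, and returns the number
// of GC cycles completed during the call and the longest pause among those
// cycles that the runtime still remembers.
func measureGCPauses(allocations int) (cycles uint32, longest time.Duration) {
	var before, after runtime.MemStats
	runtime.ReadMemStats(&before)

	for i := 0; i < allocations; i++ {
		sink = make([]byte, 64*1024) // becomes garbage on the next iteration
	}
	runtime.GC() // make sure at least one cycle has completed

	runtime.ReadMemStats(&after)
	cycles = after.NumGC - before.NumGC

	// PauseNs is a circular buffer of the 256 most recent pause times;
	// the pause for cycle n (1-based) is stored at index (n+255)%256.
	n := cycles
	if n > uint32(len(after.PauseNs)) {
		n = uint32(len(after.PauseNs))
	}
	for i := uint32(0); i < n; i++ {
		idx := (after.NumGC - i + 255) % 256
		if p := time.Duration(after.PauseNs[idx]); p > longest {
			longest = p
		}
	}
	return cycles, longest
}

func main() {
	cycles, longest := measureGCPauses(20000)
	fmt.Printf("GC cycles: %d, longest pause: %v\n", cycles, longest)
}
```

A measurement like this only covers one allocation pattern, so treat it as a quick sanity check rather than a benchmark of a real load generator.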

In my own, personal opinion Go is a really nice language, with both high-level and low-level features. The thing I like best about it, however, and which makes it very different from e.g. C++, is how small it is. You can learn the basics and become productive in no time at all. Sure, to write really good code takes time to learn like with any language, but the initial barrier to getting started is very low indeed.

Furthermore, it is fast and has good concurrency support, which is a bonus. It is supported on multiple platforms, there are tons of libraries for all sorts of things and its momentum is huge at the moment. It is unlikely to disappear for a long, long time.

Scripting language

What about the scripting language then? A modern load testing tool for developers has to allow users to write their test cases in real code, so a scripting language is necessary. Our current platform used Lua as the scripting language. Lua is a great fit for a load testing tool because the LuaJIT VM is very fast and resource efficient, which means we can simulate many virtual users on limited hardware. Lua is also a user-friendly, modern, high-level language. The main drawback with Lua is that it is still somewhat exotic. It seems to be gaining traction, but most people out there today have never used Lua, and there are not many third-party libraries available.

We decided that the main criterion for the scripting language was that it should be popular and well-known among developers. A secondary wish was that it should be high-performing. There really didn’t seem to be much choice, and we chose JavaScript because of its ubiquity: most developers have some knowledge of JavaScript. Unfortunately, JavaScript is not known to perform very well, and we initially had trouble getting a couple of Go-based JS engines to perform well. Then we found goja, which today makes k6 perform on par with the better load testing tools out there.

Scripting API

We also needed a scripting API, with load test- and test-specific functionality we could expose to the JavaScript script code.

Our main developer (and k6 maintainer) Emily came up with what we think is a very nice and developer-friendly API where you have a couple of constructs called “groups”, “checks” and “thresholds” that together form a very powerful, flexible and (we think) user-friendly API for both functional and performance testing.

I could spend an entire blog article trying to explain how these work, but it is probably better to show a code snippet:

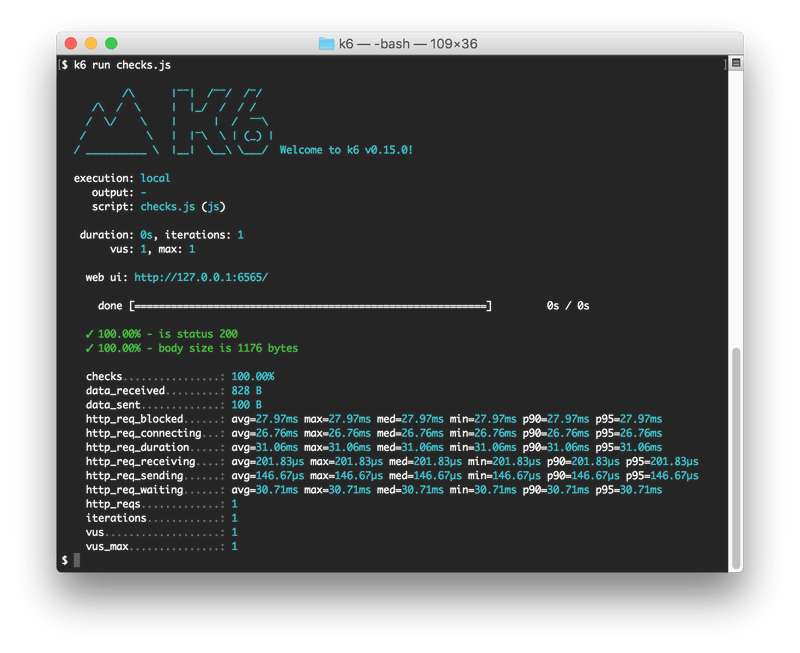

The above load test configuration, when used by k6, will result in a 10-second load test that simulates 10 concurrent, virtual users (VUs). The virtual users will load two separate “web pages” consisting of multiple URLs that are fetched (only 2 URLs per page here though, as this is an example) as part of the page load. The whole load test will generate a PASS result (that a CI server can interpret) if all the following criteria are fulfilled:

- the 50th percentile of the HTTP request duration for all requests is below 100 ms

- the 99th percentile of the HTTP request duration for all requests is below 150 ms

- the average time taken to load the page called “page1” stays below 500 ms

- 95% or more of all the check statements used in both pages were true (the check statements verify that individual transactions are successful)

If any one of those four criteria fails, k6 will generate a FAIL result (exit with a non-zero exit code) that a CI system will interpret as a failed test.
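To make the pass/fail logic concrete, here is a minimal sketch in Go of how the four criteria above could be evaluated over a set of collected samples. It is not k6's implementation. The function names, the sample data, and the nearest-rank percentile method are all assumptions made for illustration, and k6 may compute percentiles differently.

```go
package main

import (
	"fmt"
	"math"
	"os"
	"sort"
)

// percentile returns the p-th percentile (0 < p <= 100) of values using the
// nearest-rank method. The method is an assumption; k6 may interpolate.
func percentile(values []float64, p float64) float64 {
	if len(values) == 0 {
		return 0
	}
	sorted := append([]float64(nil), values...) // copy, keep caller's order
	sort.Float64s(sorted)
	rank := int(math.Ceil(p / 100 * float64(len(sorted))))
	if rank < 1 {
		rank = 1
	}
	return sorted[rank-1]
}

// mean returns the arithmetic mean of values, or 0 for an empty slice.
func mean(values []float64) float64 {
	if len(values) == 0 {
		return 0
	}
	sum := 0.0
	for _, v := range values {
		sum += v
	}
	return sum / float64(len(values))
}

// passRate returns the fraction of checks that were true.
func passRate(checks []bool) float64 {
	if len(checks) == 0 {
		return 0
	}
	passed := 0
	for _, ok := range checks {
		if ok {
			passed++
		}
	}
	return float64(passed) / float64(len(checks))
}

// evaluate applies the four criteria from the text and reports whether
// all of them hold. Durations are in milliseconds.
func evaluate(reqDurations, page1Durations []float64, checks []bool) bool {
	return percentile(reqDurations, 50) < 100 &&
		percentile(reqDurations, 99) < 150 &&
		mean(page1Durations) < 500 &&
		passRate(checks) >= 0.95
}

func main() {
	// Made-up sample data, for illustration only.
	reqDurations := []float64{42, 55, 61, 73, 88, 95, 120, 131}
	page1Durations := []float64{310, 450, 390}
	checks := []bool{true, true, true, true, true, true, true, true, true, false}

	if evaluate(reqDurations, page1Durations, checks) {
		fmt.Println("PASS")
		return
	}
	fmt.Println("FAIL")
	os.Exit(1) // a non-zero exit code is what a CI system reacts to
}
```

With this sample data only 90% of the checks are true, so the last criterion fails and the program exits with a non-zero code, which is the signal a CI system uses to mark the run as failed.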

Combined, the groups, checks and thresholds functions allow for very flexible and powerful testing, making k6 a quite competent functional testing tool that also happens to support load testing!

Command-line interface

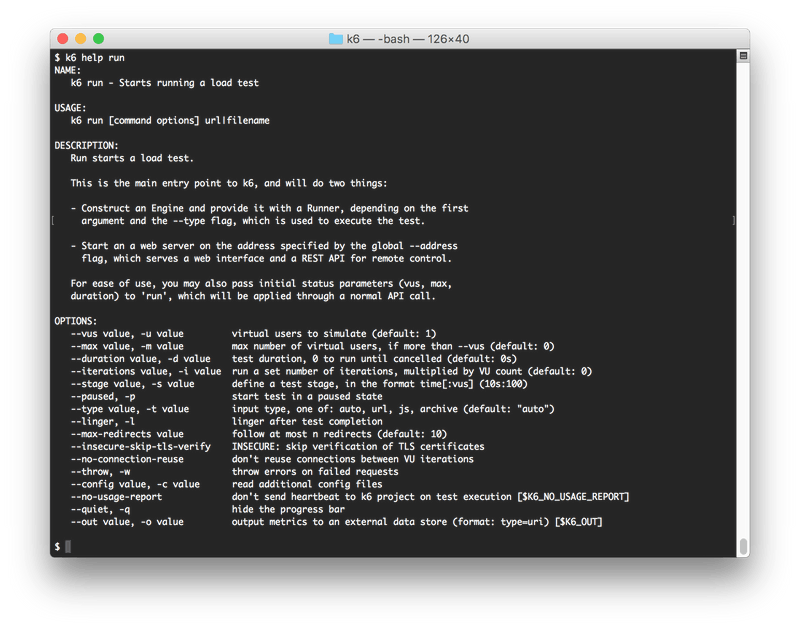

Many old load testing tools have little or no command-line interface to speak of. Instead you have a lot of functionality that is only available via some GUI. This is not acceptable to developers, or to anyone who wants to automate things, so one key requirement for k6 was that it should have a well thought out command-line interface (CLI).

We decided to base the CLI on https://github.com/urfave/cli - a library that helps implement modern, self-documented (integrated help) and consistent CLIs. All functionality in k6 can be reached through a combination of a) command-line options and b) the JavaScript script file that the virtual users in the load test execute. The built-in help functionality allows you to explore k6 iteratively from the command line. Many people will probably not have to resort to reading the online documentation for their first few sessions with k6, but can learn to use it via the command line and by looking at code examples.

But the command line of k6 is another topic that deserves a whole separate blog post, so I think I’ll just stop here for now.

Results output

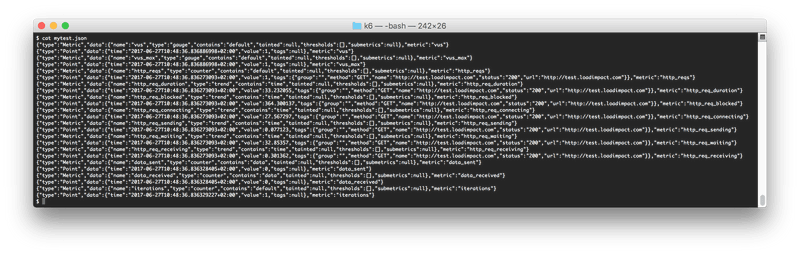

By default, k6 will output some, hopefully useful, statistics on stdout. You can of course affect what is printed, and also print arbitrary text to stdout if you want, but for purposes of automating tests, or for storing test results in some more structured format, there are a couple of interesting output options available:

- Store detailed results as a JSON file: JSON is, of course, a very widely used format, and storing your results as JSON data makes it easier to parse them using some script in an automated test setting, or to import them into some other tool for analysis.

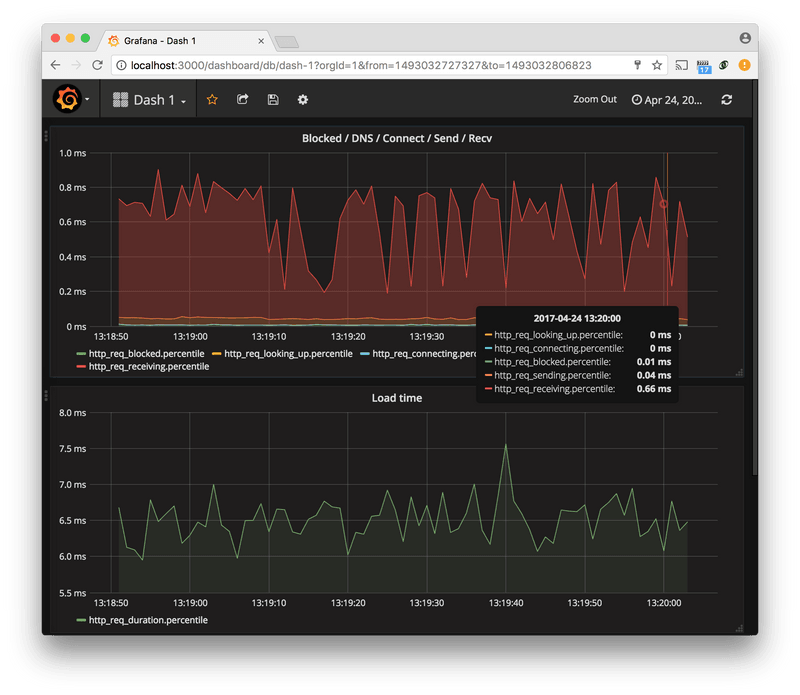

- Send detailed results to an InfluxDB instance: Here, results are streamed in real time (live streaming) to the InfluxDB database, which allows you to create setups where you can monitor your test in real time. InfluxDB is a quite popular time-series database that works well with a lot of other tools for data storage/management/analysis. A popular setup for dealing with k6 test results is to store results on an InfluxDB instance and then use Grafana to visualize results from the database.

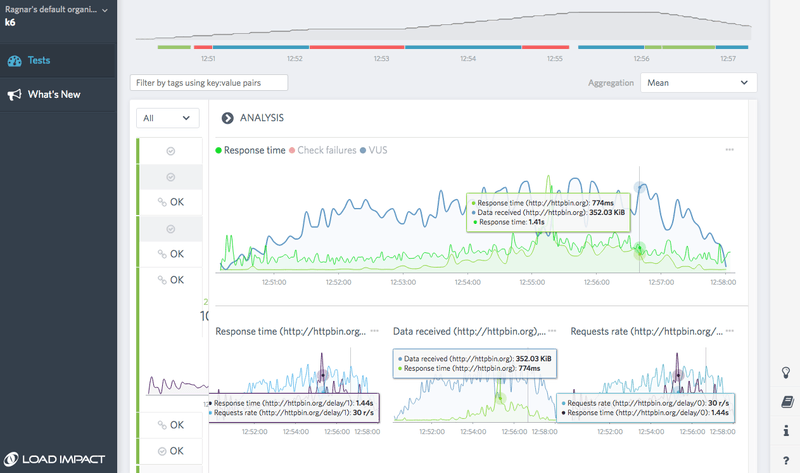

- Send detailed results to Load Impact Insights: Load Impact Insights is a managed, online service to which you can stream your load test results in real time, just like with InfluxDB. This allows you to closely monitor your test and see what happens, as it happens. Insights stores your test results and offers test result trending, collaboration and advanced analysis features. It has both a free tier and a couple of premium tiers.

Wrapping up

We believe k6 is the best load testing tool available today for developers who want to automate any part of their load testing.

We also believe k6 may be the key to the “holy grail” problem of combining load testing and functional testing, i.e. writing one test case and executing it both as a functional test and as a load test. Today, doing both kinds of testing usually means creating and maintaining two sets of test cases/configurations. However, the design of k6, and especially its scripting API, was from the start meant to support both functional testing and load testing using the same tool, so k6 has the capability to bridge these two worlds, and we are very excited to see if and how people will do this.